JOURNALISTS FOR HUMAN RIGHTS: Why Ethical Reporting Matters More Than Ever in a World of Conflict

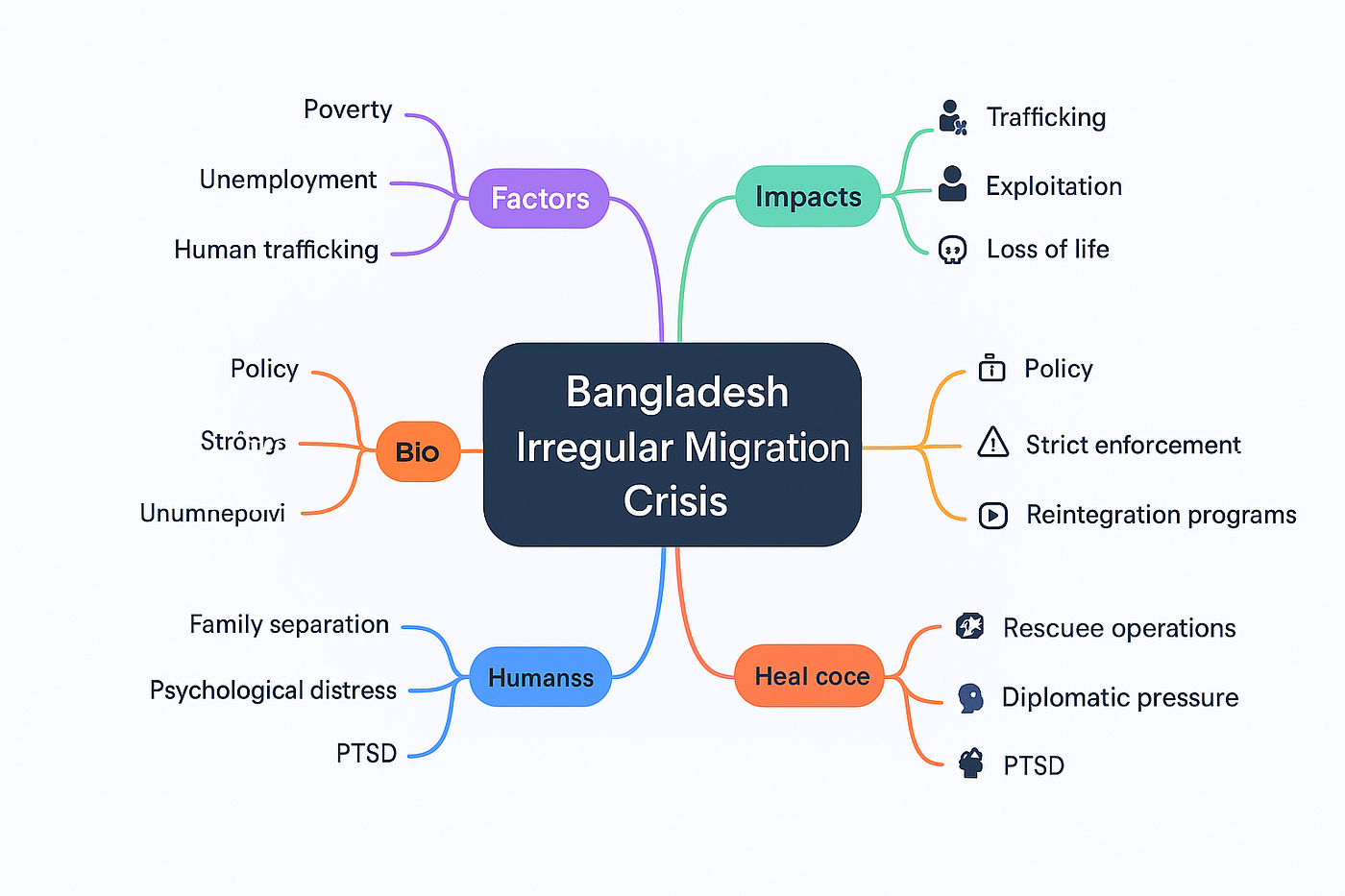

The Growing Challenge of Human Rights Reporting

By Tuhin Sarwar

In an era where wars are livestreamed, refugees are reduced to statistics, and misinformation spreads faster than verified facts, the…