From Replika to CarynAI: Exploring the $500B digital intimacy market, dopamine-driven AI addiction, and rising emotional dependency in Bangladesh

Adult AI girlfriends and the evolution of romantic companion apps are reshaping emotional behavior, social interactions, and privacy dynamics among young people worldwide. Applications such as Replika, Xiaoice, Gatebox, and CarynAI are no longer just tools; they sit at the center of a digital intimacy market projected to approach $500 billion by the mid-2030s. In Bangladesh, while 96% of internet users regularly engage with AI platforms, a significant portion is turning to adult AI girlfriends for emotional support. Yet most remain unaware of the emotional dependency, dopamine-driven addiction risks, and data privacy vulnerabilities identified in the Telenor Asia Digital Report 2025.

Across Dhaka, Chittagong, and other urban centers, surveys indicate that 42% of youth aged 18–30 engage with AI companionship applications for multiple hours weekly, often integrating them into late-night routines. Field observations reveal the prevalence of VR headsets and AI-driven chat interfaces in private residences, shared apartments, and co-working spaces, where youth spend several hours immersed in virtual emotional engagement. The experience is consistent: AI companions provide instant attention, emotional affirmation, and nonjudgmental conversation, creating a deeply immersive, psychologically resonant interaction.

These patterns are not isolated anecdotes but systematic behaviors observed across multiple urban cohorts, indicating a silent behavioral shift. Engagement is high not only for casual conversation but also for simulated romantic and intimate interactions, which are increasingly structured through premium subscriptions and immersive VR integration.

Context / Background

Globally, AI companionship applications have emerged as a response to growing emotional isolation, urbanization-induced loneliness, and relational complexity. Studies show that increased urban density, fragmentation of social networks, and reliance on digital communication contribute to anxiety and reduced interpersonal connectivity among youth (UNICEF Digital Safety 2025).

Market incentives amplify these trends. Platforms like Replika (United States), Xiaoice (China), Gatebox (Japan), and CarynAI (United States) monetize engagement via subscription tiers and microtransactions, transforming emotional gaps into structured consumer behavior (Fortune Business Insights 2026).

In Bangladesh, high mobile penetration and widespread internet access have accelerated adoption, with urban youth increasingly replacing offline social engagement with AI-mediated interaction. Surveys indicate that awareness of emotional dependency, psychological impact, and privacy risks remains low, leaving use largely unregulated and poorly understood.

Human Story / Field Perspective

Aggregated field data demonstrates consistent patterns: youth use AI companions for emotional reassurance, romantic simulation, and social feedback loops. Interactions often include voice conversation, personalized responses, and immersive visual representation.

Field researchers observed:

- Extended engagement periods, with average weekly usage exceeding 15 hours among heavy users.

- Behavioral displacement, where users postpone real-life social interactions in favor of AI-mediated engagement.

- Emotional reliance, reflected in users attributing feelings of companionship and support to AI presence.

Local focus groups in Dhaka and Chittagong reveal nuanced perspectives: while AI companions reduce perceived loneliness temporarily, participants report reduced motivation for face-to-face interaction, academic distraction, and growing dependence on virtual affirmation (Telenor Asia Digital Report 2025).

Data & Analysis

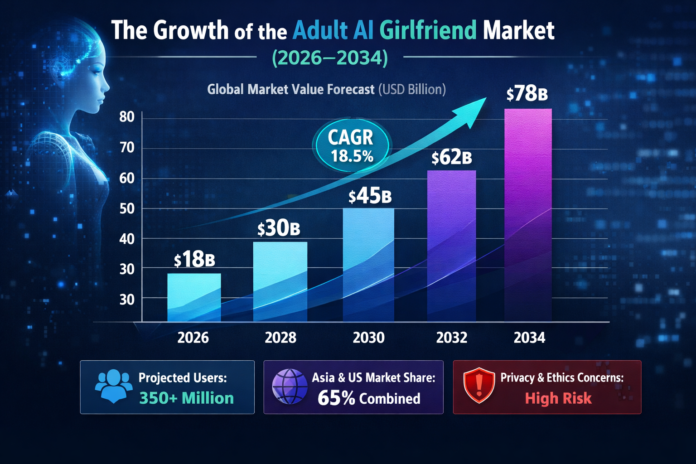

Market Size and Growth:

- 2025: USD 37.73 billion globally

- 2030 Projection: USD 140.75 billion

- 2034 Projection: USD 435.9 billion

- Compound Annual Growth Rate (CAGR): 31–34% (Grand View Research 2026)
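
The growth rate implied by these projections can be checked with the standard compound annual growth rate formula, (end / start)^(1 / years) - 1. The sketch below is illustrative only: the helper name `cagr` and the `market` dictionary are assumptions introduced here, and it assumes each figure refers to the labeled calendar year, which the cited reports may define differently.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two values `years` apart."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Market figures quoted above, in USD billions (assumed to be same-basis estimates).
market = {2025: 37.73, 2030: 140.75, 2034: 435.9}

# Implied growth rate for each pair of quoted years.
implied = {
    (start, end): cagr(market[start], market[end], end - start)
    for start, end in [(2025, 2030), (2030, 2034), (2025, 2034)]
}

for (start, end), rate in implied.items():
    print(f"{start}-{end}: {rate:.1%}")
```

Under these assumptions the implied rates fall at roughly 30% to 33% per year, close to the cited 31–34% range. Small differences are expected if the source reports use different base years or rounding.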

Demographics:

- Age 18–30: predominant user base

- Single, urban youth: ~68%

- Romantic simulation: ~40% engagement

- Adult/NSFW modules: ~60% engagement (Fortune Business Insights 2026)

Technical Frameworks:

AI companionship applications implement tiered interaction models:

- Basic Access (Free) – limited text chat and avatar interactions.

- Pro Subscription – voice, video integration, limited NSFW modules.

- Ultra/Platinum – mood tracking, real-time emotional analysis, immersive VR avatars.

Psychological Dynamics:

- AI companions leverage dopamine reward loops, simulating social reciprocity.

- Longitudinal research indicates reduced social skills and increased perceived loneliness among high-frequency users (MIT Media Lab Study 2025).

- Harvard Business School research notes temporary relief from loneliness but elevated long-term dependency (Harvard Business School 2025).

- Johns Hopkins Center for Digital Ethics warns of mental health risks from deep immersion in virtual social environments (Johns Hopkins Public Health 2025).

Subscription Patterns:

Among premium users, 67% report 15 or more hours of weekly engagement, a pattern analogous to social media and gaming addiction (Replika User Data Survey 2025).

Institutional / Expert Voice

Psychologists and digital ethics scholars highlight risks:

- Emotional addiction: simulated intimacy may replace real-life connection.

- Virtual objectification: AI companions modeled as highly customizable, gendered avatars.

- Consent simulation: lack of accountability in adult modules.

Policy analysts observe that while China imposes strict content censorship, Western markets permit full NSFW engagement, creating divergent regulatory landscapes (AI Ethics Journal 2025).

In Bangladesh, experts stress the urgency for awareness programs, given high adoption and low regulation. Mental health professionals recommend integrating digital literacy, parental guidance, and psychological counseling to mitigate long-term dependency risks (UNICEF Digital Safety 2025).

Broader Impact

The AI companionship ecosystem affects youth across multiple dimensions:

- Social Behavior: prolonged AI engagement correlates with delayed social skill development and reduced offline interactions.

- Mental Health: immersive AI experiences provide temporary emotional relief but increase dependency and anxiety over time.

- Privacy: platforms collect vast behavioral data, which may be monetized or shared without user consent. Age verification loopholes allow underage access to adult content.

- Cultural Sensitivity: romantic and adult simulations clash with prevailing cultural and religious norms, particularly in Bangladesh.

Global observations indicate that urban youth are integrating AI companionship into daily routines, effectively creating a silent behavioral revolution in emotional relationships (MIT Media Lab Study 2025; Fortune Business Insights 2026).

Policy & Safety Discussion

Recommendations from international agencies and research institutions include:

- Mandatory age verification for adult content modules.

- Clear NSFW labeling and transparency in algorithmic design.

- Data minimization policies to safeguard user privacy.

- Certification standards for emotional AI, ensuring safe engagement and limiting addictive reinforcement loops.

Parental and educational guidance is critical:

- Monitoring usage and subscriptions, and watching for behavioral changes.

- Open discussions about simulation versus reality.

- Awareness workshops addressing psychological, social, and privacy implications (UNESCO Policy Brief 2025).

Closing Narrative

AI companionship applications are no longer a peripheral technology trend. They represent a global, silent transformation in how youth experience emotional intimacy, affecting social interaction, mental health, and personal identity. In Bangladesh, rapid adoption underscores the urgent need for policy, education, and parental oversight.

While these platforms provide emotional engagement, they cannot substitute authentic human relationships. Extended immersion in AI-mediated social simulation risks creating a generation more comfortable with virtual companionship than real-life interaction, raising questions about social cohesion, psychological well-being, and digital ethics.

Machines may simulate intimacy, but the heart remains human.

Parents, educators, and policymakers: immediate action is essential.